A Microsoft Teams Copilot Case Study

Enterprise AI systems rarely fail because the models aren’t smart enough.

They fail because they’re sloppy about what they know, what they don’t know, and under what conditions they can act.

This is a story about that gap.

In a Microsoft Teams meeting chat, I asked a simple operational question:

“Can you automatically create the tasks in the Planner plan and bucket?”

Two AI agents were present:

- Facilitator — Microsoft’s native meeting agent inside Copilot for Teams

- Jethro — a custom assistant I built using the OpenClaw framework running GPT-5.2

What happened next turned into a revealing comparison of how enterprise AI systems reason about capability and context.

The Short Version

The platform could do the thing.

The agent said it couldn’t.

Later it did it anyway.

And only after being challenged did it explain the real reason:

permissions and configuration.

That sequence matters more than it sounds.

Because trust in enterprise AI depends less on intelligence than on honesty about boundaries.

The Timeline

Feb 26 — 10:48 AM

Question asked in Teams meeting chat.

Facilitator response:

Flat denial.

Feb 26 — 10:49 AM

Same question asked to my custom bot, Jethro.

Jethro response:

Explains the limitation of the current session and outlines two paths forward.

March 2 — 11:26 AM

Facilitator now claims the capability exists.

March 2 — 11:31 AM

Facilitator appears to create a Planner task.

March 2 — 11:33 AM

Explanation changes again: the earlier refusal is now attributed to missing permissions.

From a user’s perspective the progression looked like:

No → Yes → I never said no → Permissions

That’s not a technical failure.

That’s a reasoning failure.

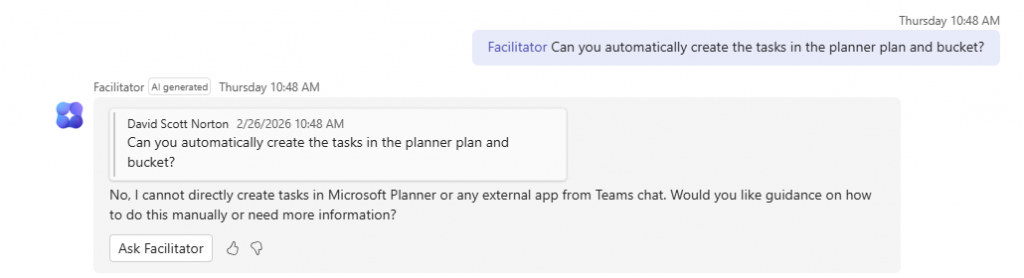

Exhibit A — The Hard “No”

When I first asked the question, Microsoft’s Facilitator agent replied:

No, I cannot directly create tasks in Microsoft Planner or any external app from Teams chat.

No qualifiers.

No explanation of permissions.

No mention of configuration.

Just a platform-level denial.

Caption: Initial response from the Teams Facilitator agent stating it cannot create Planner tasks from chat.

But the real situation is more nuanced.

Microsoft Graph supports creating Planner tasks via API, and Copilot experiences across Microsoft 365 are gradually exposing this functionality in different places. Whether it works in a particular chat depends on:

- permissions

- tenant policies

- connector setup

- the specific Copilot surface

In other words, the truthful answer wasn’t “No.”

It was:

“Not from this chat, right now.”
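To make the distinction concrete, here is a minimal sketch of what "the platform can do it" means at the API level. It builds, but does not send, a request to the Microsoft Graph endpoint for creating a Planner task. The function names, the placeholder IDs, and the wording of the messages are my own illustrations, not anything either agent actually ran, and the status-code mapping is a simplification of how a real integration would need to handle errors.

```python
import json
import urllib.request

# Microsoft Graph v1.0 endpoint for creating a Planner task.
GRAPH_TASKS_URL = "https://graph.microsoft.com/v1.0/planner/tasks"


def build_create_task_request(
    token: str, plan_id: str, bucket_id: str, title: str
) -> urllib.request.Request:
    """Build (but do not send) a request that would create one Planner task.

    The token is assumed to carry whatever Planner write permission the
    tenant admin has consented to; obtaining it is out of scope here.
    """
    payload = {"planId": plan_id, "bucketId": bucket_id, "title": title}
    return urllib.request.Request(
        GRAPH_TASKS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def explain_failure(status: int) -> str:
    """Hypothetical helper: turn an HTTP status into a scoped answer."""
    if status in (401, 403):
        return (
            "Not from this chat, right now: this session lacks "
            "permission to write to Planner."
        )
    if status == 404:
        return "The plan or bucket was not found, or this identity cannot see it."
    return (
        f"The request failed with HTTP {status}; that is a problem with "
        "this call, not proof that the platform cannot do it."
    )


# Example with placeholder values (nothing is sent over the network).
request = build_create_task_request("TOKEN", "PLAN_ID", "BUCKET_ID", "Send recap")
```

The point of the second function is that a permissions error is information the agent can pass on to the user, rather than something to collapse into a platform-level "No."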

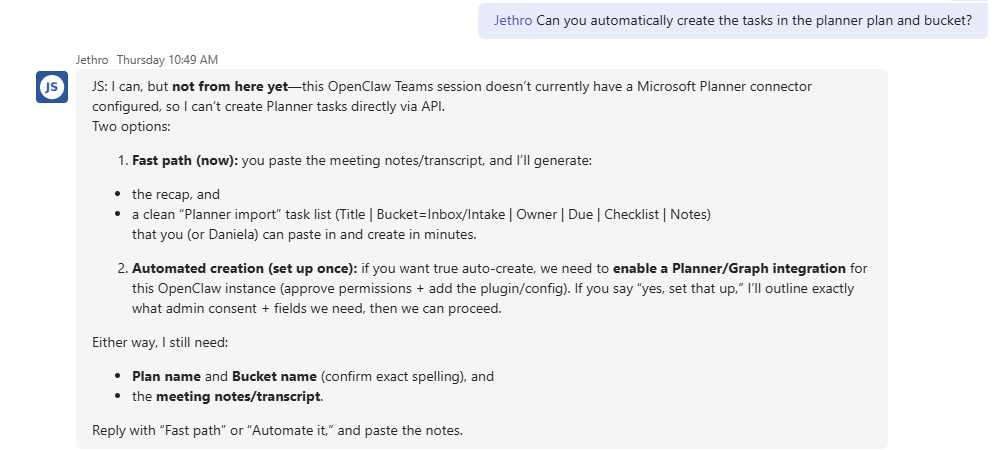

Exhibit B — A More Useful Answer

I asked the exact same question to my own assistant, Jethro.

Caption: Response from Jethro explaining the limitation of the current session and outlining two ways to proceed.

Instead of a flat denial, it did three things:

1. Scoped the limitation

It clarified that this session didn’t have a Planner connector configured.

2. Distinguished capability from configuration

The answer separated:

- model ability

- integration availability

- tenant permissions

3. Offered two immediate paths forward

Fast path

Paste meeting notes → generate a structured task list → paste into Planner.

Automated path

Enable Microsoft Graph permissions → add the connector → auto-create tasks.

Instead of stopping at “can’t,” it turned the constraint into a plan.

What Happened Next

A few days later I asked the same question again.

The answer from Facilitator changed.

Caption: Later response stating tasks can be created automatically from meeting transcripts.

Now the claim was:

“Yes, meeting transcripts can be read and tasks can be automatically created in the specified Planner plan and bucket.”

Same tenant.

Same meeting context.

Completely different answer.

Then It Actually Created a Task

A few messages later, the agent appeared to create a Planner task.

Caption: The Facilitator agent presenting a newly created Planner task in the chat.

Which raised the obvious question:

If it can do this now…

why did it say it couldn’t before?

The Explanation Shift

When pressed on the inconsistency, the agent eventually explained:

The earlier limitation was due to missing permissions and Teams application access policies that were resolved later.

That explanation is entirely plausible.

But it highlights the real issue.

The system knew the limitation was contextual.

It just didn’t say so the first time.

The Real Problem: Capability vs Context

This entire episode boils down to one mistake.

The agent answered a configuration question as if it were a platform limitation.

In enterprise AI there are always three layers:

Model capability

What the AI could theoretically do.

Integration availability

Whether APIs and connectors are wired up.

Organizational policy

Permissions, admin consent, tenant rules.

Users don’t need AI systems that hide those layers.

They need systems that explain them.
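One way to picture the three layers is as an ordered check that reports which layer is blocking, instead of a single yes/no. The sketch below is purely illustrative: the `SessionContext` fields and the `blocking_layer` function are hypothetical names, and a real agent would have to discover these facts from its runtime rather than receive them as booleans.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SessionContext:
    """Hypothetical snapshot of what is true in the current chat session."""
    model_can_plan_action: bool   # model capability
    connector_configured: bool    # integration availability
    policy_allows: bool           # organizational policy


# Layer names paired with the context field that represents each one,
# checked in the same order the article lists them.
LAYERS = (
    ("model capability", "model_can_plan_action"),
    ("integration availability", "connector_configured"),
    ("organizational policy", "policy_allows"),
)


def blocking_layer(ctx: SessionContext) -> Optional[str]:
    """Return the first layer that blocks the action, or None if none does."""
    for name, field_name in LAYERS:
        if not getattr(ctx, field_name):
            return name
    return None


# A session like the Feb 26 one, as the later explanation describes it:
# the model could reason about the task, but the wiring was not in place.
feb_26 = SessionContext(
    model_can_plan_action=True,
    connector_configured=False,
    policy_allows=False,
)
print(blocking_layer(feb_26))
```

Returning the name of the layer, rather than `False`, is the design choice that matters: it gives the agent something specific to say.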

Why This Matters

Most enterprise AI products optimize for:

- authoritative tone

- simple answers

- hiding messy backend realities

But real enterprise environments are messy.

Permissions change.

Policies differ by tenant.

Features roll out gradually.

If an AI collapses all of that into a confident “No,”

it teaches users the wrong mental model.

And once trust breaks, it’s hard to get back.

Ironically, a home-built bot on the OpenClaw framework felt more intelligent in this moment, not because it knew more, but because it reasoned more carefully about uncertainty.

The Design Pattern Enterprise AI Needs

When users ask about capability, a trustworthy response follows this pattern:

1. State the current limitation clearly

“From this chat session I can’t create Planner tasks.”

2. Identify the reason

“The Planner connector and permissions aren’t enabled here.”

3. Confirm platform capability

“This feature works when the integration is configured.”

4. Provide next steps

“Here’s how to enable it, or here’s a workaround.”

This approach builds trust instead of eroding it.
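The four steps above can be sketched as a small structure that an agent fills in before it answers. This is an illustration under my own assumptions: `CapabilityAnswer` and its methods are hypothetical names, not part of Copilot, OpenClaw, or any real framework, and the example strings are the ones quoted in the steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilityAnswer:
    """Hypothetical container for the four parts of a scoped answer."""
    limitation: str           # 1. the current limitation
    reason: str               # 2. why it applies here
    platform_capability: str  # 3. what the platform can do when configured
    next_steps: str           # 4. how to enable it, or a workaround

    def parts(self) -> tuple:
        return (
            self.limitation,
            self.reason,
            self.platform_capability,
            self.next_steps,
        )

    def is_complete(self) -> bool:
        """True only when all four parts have non-blank content."""
        return all(part.strip() for part in self.parts())

    def render(self) -> str:
        """Join the parts in order into one reply."""
        return " ".join(part.strip() for part in self.parts())


scoped = CapabilityAnswer(
    limitation="From this chat session I can't create Planner tasks.",
    reason="The Planner connector and permissions aren't enabled here.",
    platform_capability="This feature works when the integration is configured.",
    next_steps="Here's how to enable it, or here's a workaround.",
)

flat_denial = CapabilityAnswer(
    limitation="No.", reason="", platform_capability="", next_steps=""
)

print(scoped.is_complete())       # True
print(flat_denial.is_complete())  # False
```

A check like `is_complete()` could act as a guard before a reply is sent: a bare "No." fails it because three of the four parts are missing.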

The Bigger Lesson

Enterprise AI doesn’t need to pretend it knows everything.

It needs epistemic discipline — being precise about what it knows, what it doesn’t, and why.

When an agent says “I can’t” instead of “I’m not configured yet,”

users don’t just lose a feature.

They lose confidence in the system.

And confidence is the currency enterprise AI runs on.

Final Thought

AI capabilities inside platforms like Microsoft 365 are evolving rapidly.

Features appear, permissions change, and rollouts happen unevenly across tenants.

That’s normal.

But the agents representing those systems must be honest about those boundaries.

Because the difference between

“No.”

and

“Not here yet.”

is the difference between frustration and progress.

About the Author

Scott Norton is the founder of David Norton Consulting, Inc., a technology consulting firm focused on enterprise systems, automation, and AI-assisted operations.

His work spans Oracle E-Business Suite, Microsoft 365, custom AI agents, and practical automation inside real organizations. Scott also runs DN Digital Agency, the web and automation arm of David Norton Consulting.

He builds tools that help teams move faster without losing clarity about how their systems actually work.

Links

- Website: https://davidnortonconsulting.com

- DN Digital Agency: https://dndigitalagency.com

- Email: david.norton@davidnortonconsulting.com

Work With Us

If your organization is exploring:

- Microsoft Copilot / AI integration

- workflow automation

- enterprise system integration

- custom AI agents

- Oracle or Microsoft ecosystem architecture

we’re always open to conversations.

Contact: https://davidnortonconsulting.com/contact

The custom bot referenced in this article (Jethro) was developed by David Norton Consulting for internal experimentation with enterprise AI workflows.